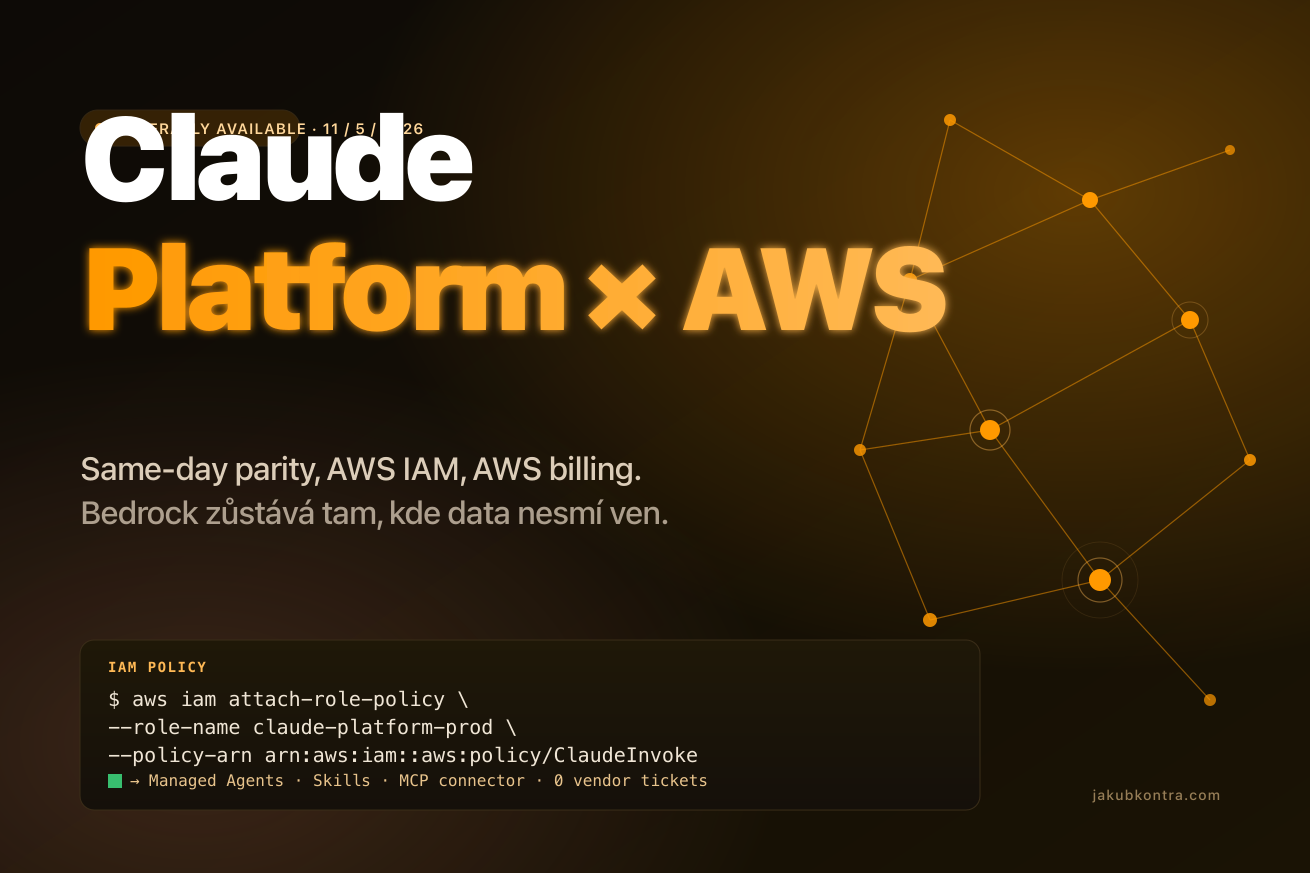

Anthropic announced GA of Claude Platform on AWS. Skills, Managed Agents, Advisor pattern and MCP connector land in your AWS account the same day Anthropic ships them, not weeks behind Bedrock. Bedrock isn't going anywhere, but it's no longer the only path to get Claude into a product through a corporate AWS account.

What Anthropic shipped

Claude Platform on AWS is a new way to consume the Claude API. Auth runs through AWS IAM, which removes the separate Anthropic account, the API key in Secrets Manager, and the second vendor in your compliance review. The audit trail lands in CloudTrail, and your security team gets model calls in the same console they already know. The invoice falls into consolidated AWS billing.

One detail gets lost in the marketing posts: Anthropic operates the service itself and remains the data processor. Inference runs on Anthropic infrastructure, so data doesn't necessarily stay inside your AWS region the way it does with Bedrock. Nothing dramatic happens to your data, but control over where inference runs sits with Anthropic, not with you. For most workloads that's fine. For some regulated scenarios it isn't.

Pricing matches the direct Claude API. No AWS markup, no discount for routing the bill through Amazon. The economics of the model don't change, only who you send the money to.

What it actually unlocks

Managed Agents are a hosted runtime for an agent. Anthropic runs the full agent loop, tool execution and sandbox. You define the model, system prompt, set of tools, MCP servers and skills, create the agent once, and then reference it by ID across sessions. The custom orchestrator in Lambda or ECS that exists only to keep context between tool calls disappears.

Advisor pattern is a way to stop overpaying for Opus where Sonnet is enough. A cheap executor runs most of the conversation, and the moment it hits a decision that needs deeper reasoning, it calls Opus as an advisor. It isn't a guaranteed saving with proven ROI; it depends on how often your workflow hits the "hard" moments. As a pattern, though, it's worth trying any time your monthly Opus bill starts scaling linearly with call volume.
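To see how much the saving depends on the escalation rate, here is a back-of-the-envelope sketch. It assumes every call goes through the executor and a fraction of them also triggers one advisor call. The function names and the per-call costs are made up for illustration; plug in figures from your own bill.

```python
def blended_cost(calls, executor_cost, advisor_cost, escalation_rate):
    """Estimated spend when every call hits the executor and a
    fraction (escalation_rate) additionally hits the advisor."""
    if not 0.0 <= escalation_rate <= 1.0:
        raise ValueError("escalation_rate must be between 0 and 1")
    return calls * (executor_cost + escalation_rate * advisor_cost)


def break_even_rate(executor_cost, advisor_cost):
    """Escalation rate at which the advisor setup costs the same as
    sending every call straight to the advisor model."""
    return (advisor_cost - executor_cost) / advisor_cost


if __name__ == "__main__":
    # Hypothetical average per-call costs in dollars, not real prices.
    executor, advisor = 0.01, 0.05
    calls = 10_000

    all_advisor = calls * advisor
    mixed = blended_cost(calls, executor, advisor, escalation_rate=0.1)
    print(f"everything on the advisor model: ${all_advisor:.2f}")
    print(f"advisor pattern at 10% escalation: ${mixed:.2f}")
    print(f"break-even escalation rate: {break_even_rate(executor, advisor):.0%}")
```

Under these assumptions the pattern only loses once the executor escalates on most calls. The model ignores the extra tokens an advisor round trip adds to the executor's context, so treat the break-even rate as an upper bound.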

Skills are modular bundles of SKILL.md plus scripts and templates. Anthropic ships pre-built skills for PowerPoint, Excel, Word and PDF. Instead of writing your own layer on top of python-pptx or openpyxl, you use an existing skill and the model already knows how to use it.

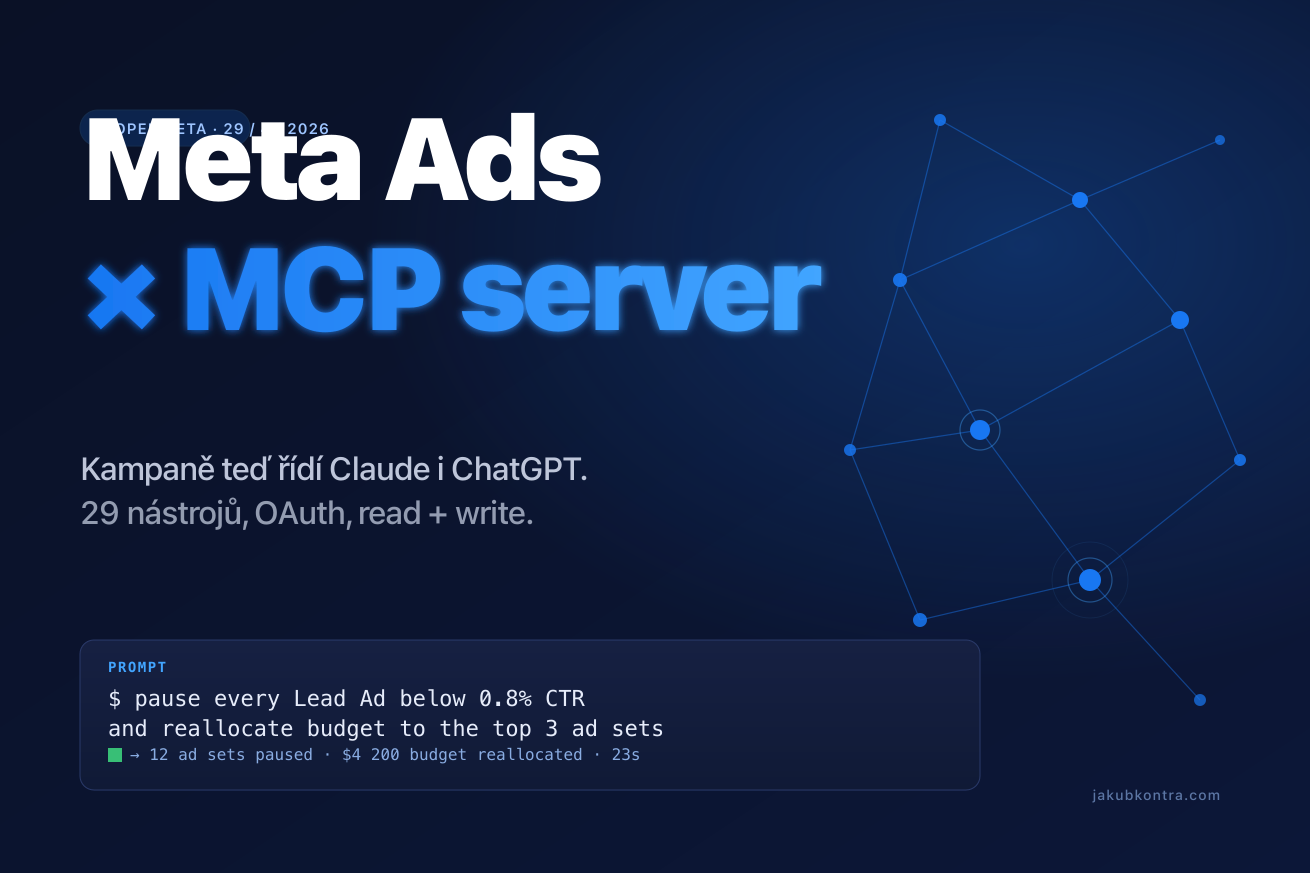

The MCP connector connects to remote MCP servers directly from the Messages API, so you don't need your own MCP client running alongside the application. MCP Tool Search helps here: according to Anthropic's own benchmarks, it reduces the context consumed by MCP tool definitions by up to 85%. The number will vary in practice depending on what your tool definitions look like, but the direction is clear: a broader ecosystem of MCP servers without burning the context window.

On top of that you get code execution in a sandboxed Python environment, where the model runs code mid-conversation, and server-side web search with cited results. Taken together, these replace your own sandbox runtime, search wrapper and MCP client with a single API.

IAM, CloudTrail, AWS commitments

Dollars spent on Claude Platform on AWS count against your AWS commitments (commitment retirement). If you have an enterprise discount signed with AWS or some other prepaid commitment, AI usage doesn't add spend with a new vendor; it draws down what you have already committed to pay.

An IAM role means you manage access to the model with the same policies as access to an S3 bucket. CloudTrail logs every call. The second onboarding goes away, the second SOC 2 review goes away, and so does explaining to legal why another key for another SaaS just landed in Secrets Manager.

When to stay on Bedrock

Bedrock handles data locality the other way around: AWS is the data processor there, inference doesn't leave the region, and the regulator doesn't have to ask where the data is flowing. Banks, healthcare, the public sector, anything under strict data residency rules: that's where Bedrock makes sense, precisely because of what Platform on AWS doesn't do. Platform on AWS fits most cases; Bedrock covers the projects where data residency inside an AWS region is a hard requirement.

What I'd do with it next sprint

Instead of three mandatory steps, here is one concrete step worth a first afternoon: create an IAM role with a narrow policy for Claude Platform and run one existing prototype through it. The specific action namespace is in the service documentation.
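A minimal sketch of what "narrow" means in practice: one Allow statement, explicit actions, explicit resources, no wildcards. The policy skeleton follows the standard IAM JSON format. The action and resource strings are deliberately left as placeholders, because the real namespace has to come from the service documentation, and `narrow_policy` is a hypothetical helper, not part of any SDK.

```python
import json

# Placeholders only: look up the real action names and resource ARNs
# in the service documentation before using this.
ACTION_PLACEHOLDER = "<service-namespace>:<Action>"
RESOURCE_PLACEHOLDER = "<resource-arn>"


def narrow_policy(actions, resources):
    """Build a single-statement IAM policy document and refuse wildcards."""
    if not actions or not resources:
        raise ValueError("actions and resources must both be non-empty")
    for action in actions:
        if action == "*" or action.endswith(":*"):
            raise ValueError(f"wildcard action not allowed: {action}")
    if "*" in resources:
        raise ValueError("wildcard resource not allowed")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ClaudePlatformPrototype",
                "Effect": "Allow",
                "Action": list(actions),
                "Resource": list(resources),
            }
        ],
    }


if __name__ == "__main__":
    policy = narrow_policy([ACTION_PLACEHOLDER], [RESOURCE_PLACEHOLDER])
    print(json.dumps(policy, indent=2))
```

Rejecting wildcards in the helper is a design choice for the prototype phase: it forces you to list exactly which calls the prototype makes, which is the information you want for the security review anyway.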

Then watch the event source in CloudTrail that corresponds to Claude Platform and look at what gets logged and what doesn't. Expect the standard AWS pattern: call metadata (who, when, which model, which role) in CloudTrail, prompt and response content handled by the application layer. Verify it against the docs for your release, though, because a new service sometimes differs from Bedrock here. If you need content-level logging for an internal audit of conversations or PII review, you have to handle it in the application. CloudTrail won't do it for you.
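One way to do that check is to export a batch of CloudTrail records and reduce them to the fields you care about. The sketch below works on already-parsed records (plain dicts) and relies only on standard CloudTrail record fields: `eventSource`, `eventName`, `eventTime`, `userIdentity` and `requestParameters`. The event source string and the sample records are placeholders I made up; take the real value from the docs or from your own trail.

```python
def summarize_calls(records, event_source):
    """Keep records from one event source and extract who, when and what.

    Also flags whether the record carries request parameters at all,
    which is the first hint about how much of the call gets logged.
    """
    rows = []
    for record in records:
        if record.get("eventSource") != event_source:
            continue
        identity = record.get("userIdentity") or {}
        rows.append(
            {
                "when": record.get("eventTime"),
                "who": identity.get("arn"),
                "action": record.get("eventName"),
                "has_request_parameters": record.get("requestParameters") is not None,
            }
        )
    return rows


if __name__ == "__main__":
    # Hypothetical records, shaped like CloudTrail entries.
    source = "<event-source-from-docs>"
    sample = [
        {
            "eventSource": source,
            "eventName": "<ActionName>",
            "eventTime": "2025-01-01T12:00:00Z",
            "userIdentity": {"arn": "arn:aws:sts::123456789012:assumed-role/demo/session"},
            "requestParameters": None,
        },
        {"eventSource": "s3.amazonaws.com", "eventName": "GetObject"},
    ]
    for row in summarize_calls(sample, source):
        print(row)
```

If `has_request_parameters` comes back true for real records, open a few and check whether any prompt text shows up there before assuming it doesn't.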

If you walk this through for one prototype, you get the answer to two questions at once: whether Claude Platform fits the existing IAM and audit model, and whether you'll have to add your own content logging layer before going to production.
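If the answer to the second question is yes, the layer can start small. The sketch below writes one JSON line per exchange to any file-like sink, masks email addresses before writing, and keeps a hash of the original prompt so identical prompts can be correlated without storing the raw text. Everything here is an assumption for illustration: the field names, the `log_exchange` helper, and the single-regex redaction, which is nowhere near a complete PII filter.

```python
import hashlib
import io
import json
import re
import time

# Deliberately simple pattern: catches common email shapes only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def redact(text):
    """Mask email addresses; a real deployment needs a broader PII pass."""
    return EMAIL.sub("[email]", text)


def log_exchange(sink, prompt, response, caller):
    """Append one redacted prompt/response pair to sink as a JSON line."""
    entry = {
        "ts": time.time(),
        "caller": caller,
        "prompt": redact(prompt),
        "response": redact(response),
        # Hash of the unredacted prompt, for correlation across entries.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
    }
    sink.write(json.dumps(entry) + "\n")
    return entry


if __name__ == "__main__":
    buffer = io.StringIO()  # stand-in for a file or log stream
    log_exchange(
        buffer,
        prompt="Summarize the thread from alice@example.com",
        response="The thread covers the Q3 rollout.",
        caller="prototype-role",
    )
    print(buffer.getvalue(), end="")
```

Pairing the `caller` field with the role ARN you see in CloudTrail gives you a join key between the metadata trail and the content log.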